We consider the convergence of the random harmonic series

$$R = \sum_{n=1}^{\infty} \frac{\sigma_n}{n},$$

where each $\sigma_n$ is chosen randomly, with probability $1/2$ of being either plus one or minus one. It follows from the Kolmogorov three-series theorem that the series is “almost surely” convergent.

We are all familiar with the harmonic series

$$\sum_{n=1}^{\infty} \frac{1}{n} = 1 + \frac{1}{2} + \frac{1}{3} + \frac{1}{4} + \cdots .$$

That it diverges was proved by Nicole Oresme in the fourteenth century and again in the seventeenth century by Pietro Mengoli (who posed the Basel problem; see below), and by both Jakob and Johann Bernoulli.

The alternating harmonic series converges:

$$\sum_{n=1}^{\infty} \frac{(-1)^{n+1}}{n} = 1 - \frac{1}{2} + \frac{1}{3} - \frac{1}{4} + \cdots = \ln 2 .$$

It is a special case of the Taylor series for $\ln(1+x)$, first published by Nicholas Mercator (not the cartographer Gerardus Mercator), but discovered independently by Isaac Newton and Gregory Saint-Vincent at about the same time.

Random Harmonic Series

Suppose now that the signs of the terms of the harmonic series are changed in a random manner. We could imagine tossing a coin for each term and choosing plus one for heads and minus one for tails. We get the series

$$R = \sum_{n=1}^{\infty} \frac{\sigma_n}{n},$$

where each $\sigma_n$ is chosen randomly, with probability 1/2 of being either plus one or minus one. The series $R$ is called a random harmonic series (RHS).

Since there is a likelihood of cancellation between the terms of the random harmonic series $R$, convergence is a possibility. Indeed, since we expect that “half” the terms are positive and “half” negative, we might expect the RHS to converge, just like the alternating harmonic series $\sum_{n=1}^{\infty} (-1)^{n+1}/n$.

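We may consider the sum $R = \sum_{n=1}^{\infty} \sigma_n / n$ to be a random variable. For each choice of the random sequence of signs $\{\sigma_n\}$ it takes a real value or diverges to $+\infty$ or $-\infty$. By a symmetry argument, the expected value of $R$ is $E(R) = 0$. Since $\sigma_m$ and $\sigma_n$ are uncorrelated for $m \ne n$, the cross terms vanish and the second moment is

$$E(R^2) = \sum_{m=1}^{\infty} \sum_{n=1}^{\infty} \frac{E(\sigma_m \sigma_n)}{mn} = \sum_{n=1}^{\infty} \frac{E(\sigma_n^2)}{n^2} .$$

Since $\sigma_n^2 = 1$, this is the series of the Basel problem, posed by Pietro Mengoli, and solved by Leonhard Euler in 1734:

$$E(R^2) = \sum_{n=1}^{\infty} \frac{1}{n^2} = \frac{\pi^2}{6} .$$

The sum is the value of the Riemann zeta-function $\zeta(2)$. The standard deviation of $R$ is $\pi/\sqrt{6} \approx 1.28$.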
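The mean and second moment can be checked roughly by simulation. The following is a minimal sketch, not taken from the sources: it truncates each series after a fixed number of terms, and the function names, ensemble size, truncation length and seed are all illustrative assumptions.

```python
import math
import random

def partial_sum(n_terms, rng):
    """One sample of the random harmonic series truncated after n_terms terms."""
    total = 0.0
    for n in range(1, n_terms + 1):
        sign = 1.0 if rng.random() < 0.5 else -1.0  # fair coin toss
        total += sign / n
    return total

def sample_moments(n_series=2000, n_terms=1000, seed=0):
    """Sample mean and sample second moment over an ensemble of truncated series."""
    rng = random.Random(seed)  # fixed seed (an assumption) for repeatability
    samples = [partial_sum(n_terms, rng) for _ in range(n_series)]
    mean = sum(samples) / n_series
    second_moment = sum(x * x for x in samples) / n_series
    return mean, second_moment

mean, second_moment = sample_moments()
print(f"sample mean          = {mean:+.4f}  (theory: 0)")
print(f"sample second moment = {second_moment:.4f}  (theory: {math.pi ** 2 / 6:.4f})")
```

With an ensemble of this size the estimates should agree with the theoretical values only to within sampling error, and the truncation makes the second moment very slightly smaller than $\pi^2/6$.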

With the above properties, it follows from a result of Kolmogorov, the Three-Series Theorem, that a random harmonic series converges almost surely, that is, with probability 1. In contrast, the series

$$\sum_{n=1}^{\infty} \frac{\sigma_n}{\sqrt{n}},$$

whose terms have variances $1/n$ with a divergent sum, may be shown, again by means of the Kolmogorov Three-Series Theorem, to diverge.

Riemann’s Rearrangement Theorem

Schmuland (2003) showed that the probability of the sum $R$ having a very large value is extremely small, but non-vanishing. There are no upper or lower bounds on $R$, so the domain of the distribution is the extended real line $[-\infty, +\infty]$.

This is also clear from Riemann’s rearrangement theorem: if an infinite series $\sum a_n$ is conditionally convergent, that is,

$$\sum_{n=1}^{\infty} a_n \ \text{converges, but} \ \sum_{n=1}^{\infty} |a_n| = \infty ,$$

then the terms can be rearranged so that the new series converges to any given real value. Given $x \in \mathbb{R}$, there is a permutation $\tau$ of the positive integers such that

$$\sum_{n=1}^{\infty} a_{\tau(n)} = x .$$

For simplicity assume $x > 0$. We assign $\sigma_n = +1$ until the partial sum of $\sum \sigma_n / n$ exceeds $x$; then we assign $\sigma_n = -1$ until the partial sum is less than $x$; and iterate this process so that the sum of the infinite series is $x$. Thus, a random harmonic series (strictly, almost any RHS) can be rearranged to have any real value, or to diverge. But the rearranged series is another RHS.
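The sign-assignment procedure just described can be sketched in a few lines. This is an illustration only: the function name `greedy_signs`, the target value 2 and the number of terms are assumptions chosen for the example.

```python
def greedy_signs(target, n_terms):
    """Choose each sign to push the partial sum of sum(sign_n / n) towards target.

    The sign is +1 while the partial sum has not exceeded the target
    and -1 while it lies above the target.
    """
    signs = []
    partial = 0.0
    for n in range(1, n_terms + 1):
        sign = 1 if partial <= target else -1
        partial += sign / n
        signs.append(sign)
    return signs, partial

signs, partial = greedy_signs(target=2.0, n_terms=100_000)
print("first ten signs:", signs[:10])
print("partial sum after 100000 terms:", partial)
```

Once the partial sum has crossed the target for the first time, each later step of size $1/n$ moves it back towards the target, so the distance from the target after $n$ terms is at most $1/n$ and the sum converges to the chosen value.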

Numerical Experiments

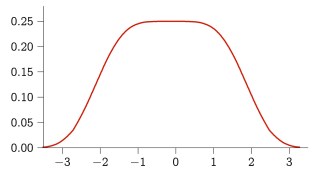

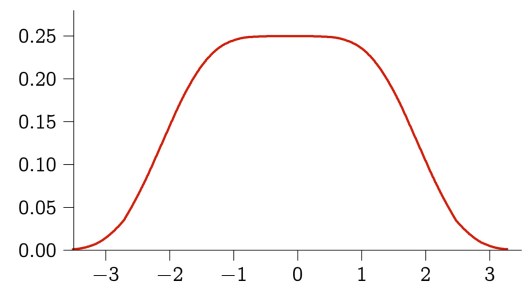

Numerical experiments with large ensembles of random harmonic series show that the sum is generally small. The graph shows the distribution of the random variable $R$, the sum of the series. Clearly, it is symmetric about zero, as expected, and falls off sharply for larger values of $|R|$. The distribution is very flat near $R = 0$. Writing $f(x)$ for the probability density of $R$, the value of $f(0)$ looks “suspiciously close to 1/4” (Schmuland, 2003). Also, $f(2)$ is very close to $1/8$. Schmuland showed that $f(2)$ is not exactly $1/8$, but differs from it by less than $10^{-42}$, an incredibly small quantity.
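A crude version of such an experiment is sketched below. It is not the computation behind the figures: the ensemble size, the truncation after 500 terms, the bin width and the seed are assumptions, and the density is estimated simply as the fraction of samples falling in a small interval divided by the width of that interval.

```python
import random

def sample_sum(n_terms, rng):
    """One sample of the random harmonic series truncated after n_terms terms."""
    total = 0.0
    for n in range(1, n_terms + 1):
        total += (1.0 if rng.random() < 0.5 else -1.0) / n
    return total

def density_estimate(samples, centre, width):
    """Fraction of samples within width/2 of centre, divided by width."""
    half = width / 2.0
    count = sum(1 for x in samples if abs(x - centre) <= half)
    return count / (len(samples) * width)

rng = random.Random(1)  # fixed seed (an assumption) for repeatability
samples = [sample_sum(500, rng) for _ in range(4000)]

density_near_0 = density_estimate(samples, centre=0.0, width=0.5)
density_near_2 = density_estimate(samples, centre=2.0, width=0.5)
print(f"estimated density near 0: {density_near_0:.3f}  (compare 1/4)")
print(f"estimated density near 2: {density_near_2:.3f}  (compare 1/8)")
```

An experiment of this size can only suggest the values 1/4 and 1/8 to within a few percent; it comes nowhere near resolving the tiny discrepancy found by Schmuland.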

Asymmetric series

We have assumed that each sign $\sigma_n$ takes the values $+1$ and $-1$ with equal probabilities. Suppose now that $P(\sigma_n = +1) = p$ and $P(\sigma_n = -1) = 1 - p$, with $p > 1/2$. The balance between positive and negative terms is now disrupted: there are “more” positive than negative terms. We must therefore suspect divergence of the series. Kolmogorov’s theorem shows this to be the case (MSE, 2015).
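One way to see why divergence is to be expected is to look at the mean of the partial sums. If the sign is $+1$ with probability $p$, its expected value is $2p - 1$, so the mean of the first $N$ terms is $(2p - 1)$ times the $N$-th partial sum of the harmonic series, which grows without bound when $p \ne 1/2$. The sketch below tabulates this; the function name and the value $p = 0.6$ are hypothetical choices for illustration, and the growth of the mean is only suggestive, with the three-series theorem supplying the rigorous statement.

```python
def expected_partial_sum(p, n_terms):
    """Mean of the first n_terms of sum(sign_n / n) when P(sign_n = +1) = p."""
    drift = 2.0 * p - 1.0  # E[sign_n] = (+1) * p + (-1) * (1 - p)
    harmonic = sum(1.0 / n for n in range(1, n_terms + 1))
    return drift * harmonic

for n_terms in (10, 1_000, 100_000):
    fair = expected_partial_sum(0.5, n_terms)
    biased = expected_partial_sum(0.6, n_terms)  # p = 0.6 is an illustrative value
    print(f"N = {n_terms:>6}: mean with p = 0.5 is {fair:.3f}, with p = 0.6 is {biased:.3f}")
```

For the fair coin the mean is zero for every $N$, while for the biased coin it grows like $(2p - 1) \ln N$.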

Sources

Schmuland, Byron, 2003: Random Harmonic Series, Amer. Math. Monthly, 110, 407–416.

MSE: Mathematics Stack Exchange (2015).

Wikipedia article Harmonic series (mathematics): http://www.wikipedia.org/