Next week there will be a post on tuning pianos using a method based on entropy. In preparation for that, we consider here how the entropy of a probability distribution function with twin peaks changes with the separation between the peaks.

Classical Entropy

Entropy was introduced in classical thermodynamics about 150 years ago and, somewhat later, statistical mechanics provided insight into the nature of this quantity. Further insight came with the use of entropy in communication theory. This allowed entropy to be understood as missing information.

Suppose a variable $x$ is described by the probability distribution function $p(x)$. For example, it might be $N(\mu, \sigma^2)$, a normal distribution with mean $\mu$ and variance $\sigma^2$. We can think of $\sigma$ as a measure of our uncertainty, or lack of information. If $\sigma$ is very small, the value is almost certainly close to $\mu$. If $\sigma$ is large, there is less information about the value of $x$: for a given probability level, it occupies a larger interval.

However, $\sigma$ is not a good measure of missing information. Suppose the distribution is a combination of two normal distributions:

$$p(x) = \tfrac{1}{2}\left[ N(\mu_1, \sigma^2) + N(\mu_2, \sigma^2) \right]$$

Now suppose $\sigma$ is small, so that the distribution has two sharp peaks. Then $x$ is probably close to either $\mu_1$ or $\mu_2$. However, the variance of $p(x)$ becomes large, increasing as $\Delta^2/4$ where $\Delta = |\mu_1 - \mu_2|$, even though the information content (that $x$ is close to $\mu_1$ or to $\mu_2$) is essentially the same.
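The growth of the variance with the separation can be checked numerically. The following is a minimal sketch, assuming an equal-weight mixture of two normal densities with a common standard deviation; the function names (`normal_pdf`, `mixture_pdf`, `mixture_variance`) and the grid settings are illustrative choices, not part of the original discussion. For this equal-weight case the exact variance is $\sigma^2 + \Delta^2/4$, which the script compares against a trapezoidal-rule estimate.

```python
import math

def normal_pdf(x, mu, sigma):
    """Density of a normal distribution with mean mu and std dev sigma."""
    z = (x - mu) / sigma
    return math.exp(-0.5 * z * z) / (sigma * math.sqrt(2.0 * math.pi))

def mixture_pdf(x, mu1, mu2, sigma):
    """Equal-weight mixture of two normals sharing the same sigma (assumption)."""
    return 0.5 * (normal_pdf(x, mu1, sigma) + normal_pdf(x, mu2, sigma))

def mixture_variance(mu1, mu2, sigma, n=20001):
    """Variance of the mixture, estimated with the trapezoidal rule."""
    lo = min(mu1, mu2) - 10.0 * sigma   # tails beyond 10 sigma are negligible
    hi = max(mu1, mu2) + 10.0 * sigma
    h = (hi - lo) / (n - 1)
    mean = 0.0
    second = 0.0
    for i in range(n):
        x = lo + i * h
        w = h * (0.5 if i in (0, n - 1) else 1.0)  # trapezoid end weights
        p = mixture_pdf(x, mu1, mu2, sigma)
        mean += w * x * p
        second += w * x * x * p
    return second - mean * mean

sigma = 0.1  # sharp peaks
for delta in (0.0, 1.0, 4.0, 16.0):
    numeric = mixture_variance(0.0, delta, sigma)
    exact = sigma**2 + delta**2 / 4.0
    print(f"delta={delta:5.1f}  numeric={numeric:.6f}  exact={exact:.6f}")
```

The peaks stay equally sharp in every row, yet the variance is dominated by the separation term, which is why it is a poor proxy for missing information here.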

Differential Entropy

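We need a better measure of the missing information. It is provided by the differential entropy (also called the continuous entropy)

$$H = -\int_{-\infty}^{\infty} p(x) \log p(x)\, dx$$

For the normal distribution, we can evaluate the integral, with the result that

$$H = \tfrac{1}{2}\log\left(2\pi e \sigma^2\right)$$

So we see that $H \to -\infty$ as $\sigma \to 0$ and $H \to +\infty$ as $\sigma \to \infty$.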
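For readers who want the intermediate steps, here is a short sketch of the evaluation for the normal case. It assumes natural logarithms and writes the density as $p(x)$ with mean $\mu$ and variance $\sigma^2$; the symbol $H$ for the differential entropy is a notational choice made for this sketch.

```latex
\begin{aligned}
p(x) &= \frac{1}{\sqrt{2\pi\sigma^2}}
        \exp\!\left(-\frac{(x-\mu)^2}{2\sigma^2}\right), \\
\log p(x) &= -\tfrac{1}{2}\log\left(2\pi\sigma^2\right)
             - \frac{(x-\mu)^2}{2\sigma^2}, \\
H &= -\int_{-\infty}^{\infty} p(x)\,\log p(x)\,dx \\
  &= \tfrac{1}{2}\log\left(2\pi\sigma^2\right)\int_{-\infty}^{\infty} p(x)\,dx
     + \frac{1}{2\sigma^2}\int_{-\infty}^{\infty} (x-\mu)^2\,p(x)\,dx \\
  &= \tfrac{1}{2}\log\left(2\pi\sigma^2\right) + \tfrac{1}{2}
   = \tfrac{1}{2}\log\left(2\pi e\,\sigma^2\right).
\end{aligned}
```

The two integrals are the normalization of $p(x)$, which equals 1, and the variance, which equals $\sigma^2$. The mean $\mu$ drops out: shifting a distribution does not change its differential entropy.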

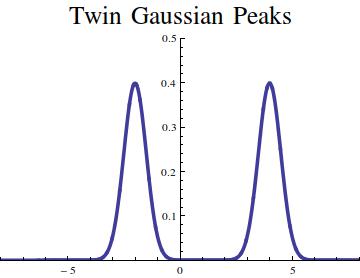

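Let us consider the average of twin peaks, one centered at the origin, the other at $\mu$, each with unit variance:

$$p(x) = \tfrac{1}{2}\left[ N(0, 1) + N(\mu, 1) \right]$$

When the peaks coincide, $\mu = 0$ and the entropy is $H = \tfrac{1}{2}\log(2\pi e) \approx 1.42$ (using natural logarithms). When they are well separated, so that the peaks do not interact, we find that $H = \tfrac{1}{2}\log(2\pi e) + \log 2 \approx 2.11$. In general, the integral can be evaluated numerically. The result is shown here.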
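One way to carry out the numerical evaluation is sketched below. It is an illustration under stated assumptions rather than the method used to produce the figure: it takes an equal-weight average of two unit-variance normal densities, uses natural logarithms, and integrates with a plain trapezoidal rule. The names `twin_peak_pdf` and `differential_entropy`, and the grid parameters `n` and `pad`, are hypothetical.

```python
import math

def twin_peak_pdf(x, mu):
    """Average of two unit-variance normal densities centered at 0 and mu."""
    c = 1.0 / math.sqrt(2.0 * math.pi)
    return 0.5 * c * (math.exp(-0.5 * x * x) + math.exp(-0.5 * (x - mu) ** 2))

def differential_entropy(mu, n=8001, pad=10.0):
    """Approximate -integral of p*log(p) (natural log) by the trapezoidal rule."""
    lo = min(0.0, mu) - pad   # extend 10 standard deviations past each peak
    hi = max(0.0, mu) + pad
    h = (hi - lo) / (n - 1)
    total = 0.0
    for i in range(n):
        x = lo + i * h
        p = twin_peak_pdf(x, mu)
        if p > 0.0:           # guard against log(0) from underflow
            w = 0.5 if i in (0, n - 1) else 1.0
            total -= w * p * math.log(p)
    return total * h

# Reference values: one unit-variance peak, and two fully separated peaks.
h_single = 0.5 * math.log(2.0 * math.pi * math.e)
h_limit = h_single + math.log(2.0)

for mu in (0.0, 1.0, 2.0, 4.0, 8.0, 16.0):
    print(f"mu={mu:5.1f}  H={differential_entropy(mu):.4f}")
print(f"single peak: {h_single:.4f}   separated limit: {h_limit:.4f}")
```

The extra $\log 2$ in the separated limit has a simple reading: once the peaks no longer overlap, the only additional missing information is which of the two equally likely peaks the value belongs to.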

In conclusion, we see that a single Gaussian peak has lower entropy than two separated peaks. As the separation grows, the entropy tends to a finite limit, exceeding the single-peak value by $\log 2$, whereas the variance grows without bound.

Next week’s article will apply these entropy ideas to the practical problem of piano tuning!