Given a function $f(x)$ of a real variable $x$, we often have to find the values of $x$ for which the function is zero. A simple iterative method was devised by Isaac Newton and refined by Joseph Raphson. It is known either as Newton’s method or as the Newton-Raphson method. It usually produces highly accurate approximations to the roots of the equation $f(x) = 0$.

We start with , a function of a single variable

. We assume that its derivative

is known or can be calculated. An initial value, or first guess,

is selected. This may be a completely arbitrary value, but it is better to use any available knowledge of the function to make an informed guess. Provided the initial value

is close to a root, the value

given by

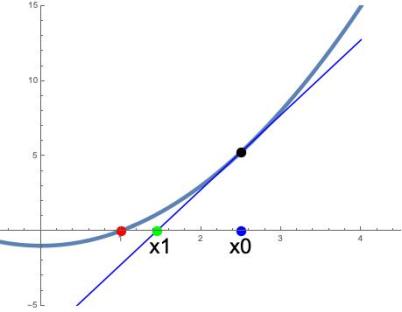

is a better approximation of the root than . Geometrically,

is the point where the tangent of the graph of

at

intersects the

-axis (see figure).
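The formula for $x_1$ follows directly from this geometric picture. The tangent to the graph at $x_0$ is the straight line through the point $(x_0, f(x_0))$ with slope $f'(x_0)$; setting its height to zero gives the point where it crosses the axis:

$$
\begin{aligned}
y &= f(x_0) + f'(x_0)\,(x - x_0) && \text{(tangent line at } x_0\text{)}\\
0 &= f(x_0) + f'(x_0)\,(x_1 - x_0) && \text{(it meets the } x\text{-axis at } x = x_1\text{)}\\
x_1 &= x_0 - \frac{f(x_0)}{f'(x_0)} && \text{(provided } f'(x_0) \neq 0\text{)}
\end{aligned}
$$

If $f'(x_0) = 0$ the tangent is horizontal and never reaches the axis, which is one way the method can break down.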

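This process may be iterated to obtain successively better approximations:

$$x_{n+1} = x_n - \frac{f(x_n)}{f'(x_n)}, \qquad n = 0, 1, 2, \ldots$$

until the required accuracy is achieved. Normally, the procedure converges very quickly. Indeed, near a simple root (one at which $f'$ does not vanish), convergence is quadratic: the number of accurate digits roughly doubles at each stage.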
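The iteration translates almost line for line into code. The following is a minimal Python sketch, not a production root-finder: the function name `newton`, the tolerance `tol` and the iteration cap `max_iter` are our own choices, and the loop simply stops when two successive iterates agree to within `tol`. The test function $f(x) = \cos x - x$, whose root is about 0.739085, is chosen here only for illustration.

```python
import math
from typing import Callable, List


def newton(f: Callable[[float], float],
           fprime: Callable[[float], float],
           x0: float,
           tol: float = 1e-12,
           max_iter: int = 50) -> List[float]:
    """Return the Newton-Raphson iterates [x0, x1, x2, ...].

    Iteration stops once two successive iterates differ by less than
    `tol`, or after `max_iter` updates, whichever comes first.
    """
    xs = [x0]
    x = x0
    for _ in range(max_iter):
        slope = fprime(x)
        if slope == 0:
            # Horizontal tangent: it never meets the x-axis, so no update exists.
            raise ZeroDivisionError(f"f'(x) is zero at x = {x}")
        x_new = x - f(x) / slope  # x_{n+1} = x_n - f(x_n) / f'(x_n)
        xs.append(x_new)
        if abs(x_new - x) < tol:
            break
        x = x_new
    return xs


if __name__ == "__main__":
    # Illustrative test case (our choice): the root of cos x = x, from x0 = 1.
    root = 0.7390851332151607  # reference value, used only for the error column
    iterates = newton(lambda x: math.cos(x) - x,
                      lambda x: -math.sin(x) - 1.0,
                      1.0)
    for n, x in enumerate(iterates):
        print(n, f"{x:.15f}", f"error {abs(x - root):.1e}")
```

The error column shows the quadratic convergence at work: after the first step the errors fall roughly from $10^{-2}$ to $10^{-5}$ to $10^{-10}$, after which the iterates agree with the root to machine precision.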

A Simple Example: Roots of Numbers

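Suppose we want the square root of a number $a > 0$. We consider the function $x^2 - a$ and seek the value of $x$ for which this vanishes. Let us put $f(x) = x^2 - a$. The derivative of $f$ is immediately available: $f'(x) = 2x$. Then the iterative procedure is

$$x_{n+1} = x_n - \frac{x_n^2 - a}{2x_n}$$

Simplifying, we get the algorithm

$$x_{n+1} = \frac{1}{2}\left(x_n + \frac{a}{x_n}\right)$$

We can easily iterate this.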

For example, if we take , the successive approximations, to five decimal places, are

. The exact value is

.
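The square-root algorithm $x_{n+1} = \frac{1}{2}\left(x_n + a/x_n\right)$ needs nothing more than division and averaging. Here is a minimal Python sketch; the function name `babylonian_sqrt` is hypothetical, and the values $a = 2$ and $x_0 = 1$ in the usage section are our own illustrative choice, not necessarily those of the example above.

```python
import math
from typing import List


def babylonian_sqrt(a: float, x0: float, steps: int) -> List[float]:
    """Apply x_{n+1} = (x_n + a / x_n) / 2, the Newton-Raphson update for
    f(x) = x**2 - a, `steps` times and return [x0, x1, ..., x_steps]."""
    if a < 0 or x0 <= 0:
        raise ValueError("need a >= 0 and a positive starting value x0")
    xs = [x0]
    for _ in range(steps):
        x = xs[-1]
        xs.append(0.5 * (x + a / x))  # average of x and a/x
    return xs


if __name__ == "__main__":
    # Illustrative choice (ours, not from the text): a = 2, x0 = 1.
    for n, x in enumerate(babylonian_sqrt(2.0, 1.0, 5)):
        print(n, f"{x:.5f}")
    print("math.sqrt(2) =", f"{math.sqrt(2.0):.10f}")
```

With these values the printed iterates are 1.00000, 1.50000, 1.41667, 1.41422, 1.41421 and 1.41421, so from $x_4$ on they agree with $\sqrt{2} \approx 1.4142135624$ to five decimal places. Averaging works because, for positive $x_n \neq \sqrt{a}$, one of $x_n$ and $a/x_n$ lies above $\sqrt{a}$ and the other below it.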

Wider Applications

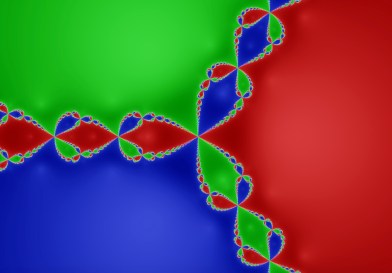

Newton’s method can be applied to find the zeroes of functions of a complex variable. Each zero has a basin of attraction in the complex plane, comprising the set of all initial values for which the method converges to that zero. For many complex functions, the boundaries of the basins of attraction are fractal in nature.

This figure shows the three basins of attraction for the function $f(z) = z^3 - 1$. The polynomial equation $z^3 - 1 = 0$ has three roots, at $z = 1$, $z = e^{2\pi i/3}$ and $z = e^{4\pi i/3}$. If the initial value $z_0$ is in the red region, the Newton-Raphson iterations converge to $z = 1$. The red region is the basin of attraction for $z = 1$. Likewise, initial values in the blue and green regions converge to the two complex roots. The figure indicates the intricate nature of the interfaces between the three regions: the interfaces are fractals.
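To make the idea of a basin of attraction concrete, the following Python sketch labels each starting point $z_0$ by the root that Newton's method reaches from it. It assumes the function is $f(z) = z^3 - 1$, with roots $1$, $e^{2\pi i/3}$ and $e^{4\pi i/3}$; the grid size, tolerance and iteration cap are arbitrary choices, and points that fail to settle within the cap are marked `.`. The output is a coarse text picture, not a reproduction of the figure.

```python
import cmath

# Assumed example: f(z) = z**3 - 1, whose roots are the cube roots of unity.
ROOTS = [cmath.exp(2j * cmath.pi * k / 3) for k in range(3)]


def basin(z0: complex, max_iter: int = 50, tol: float = 1e-10) -> int:
    """Return the index k of the root ROOTS[k] that Newton's method for
    z**3 - 1 reaches from z0, or -1 if it does not settle on a root."""
    z = z0
    for _ in range(max_iter):
        dz = 3 * z * z  # f'(z)
        if dz == 0:
            return -1  # no tangent step is defined at z = 0
        z = z - (z * z * z - 1) / dz
        for k, r in enumerate(ROOTS):
            if abs(z - r) < tol:
                return k
    return -1


if __name__ == "__main__":
    # Text map of the square -2 <= Re z, Im z <= 2.
    # '0' marks the basin of z = 1; '1' and '2' mark the two complex roots.
    cols, rows = 60, 30
    for i in range(rows + 1):
        y = 2.0 - 4.0 * i / rows
        line = ""
        for j in range(cols + 1):
            x = -2.0 + 4.0 * j / cols
            k = basin(complex(x, y))
            line += str(k) if k >= 0 else "."
        print(line)
```

Even at this resolution, the regions of `0`s, `1`s and `2`s interleave along the boundaries instead of meeting along clean curves, which is a small-scale glimpse of the fractal interfaces described above.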

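Newton’s method can also be used to solve systems of equations. Suppose we have $k$ equations in $k$ unknowns, written as $\mathbf{F}(\mathbf{x}) = \mathbf{0}$, where $\mathbf{F}: \mathbb{R}^k \to \mathbb{R}^k$. The inverse of the Jacobian matrix $J_{\mathbf{F}}(\mathbf{x})$ is then used in place of the reciprocal of the derivative, so that

$$\mathbf{x}_{n+1} = \mathbf{x}_n - J_{\mathbf{F}}(\mathbf{x}_n)^{-1}\,\mathbf{F}(\mathbf{x}_n).$$

Rather than computing the inverse of the Jacobian matrix, we solve the system of linear equations

$$J_{\mathbf{F}}(\mathbf{x}_n)\,(\mathbf{x}_{n+1} - \mathbf{x}_n) = -\mathbf{F}(\mathbf{x}_n)$$

for the change $\mathbf{x}_{n+1} - \mathbf{x}_n$.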
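For two equations in two unknowns, the linear solve at each step, $J_{\mathbf{F}}(\mathbf{x}_n)\,\mathbf{d} = -\mathbf{F}(\mathbf{x}_n)$ for the change $\mathbf{d} = \mathbf{x}_{n+1} - \mathbf{x}_n$, can be done with Cramer's rule, which keeps the following Python sketch free of external libraries. The helper names (`solve_2x2`, `newton_system`, `F_example`, `J_example`) and the example system $x^2 + y^2 = 4$, $xy = 1$ are hypothetical, chosen only for illustration.

```python
import math
from typing import Callable, Tuple

Vec = Tuple[float, float]
Mat = Tuple[Vec, Vec]


def solve_2x2(J: Mat, b: Vec) -> Vec:
    """Solve the linear system J d = b for d by Cramer's rule."""
    (a11, a12), (a21, a22) = J
    det = a11 * a22 - a12 * a21
    if det == 0:
        raise ZeroDivisionError("the Jacobian is singular")
    return ((b[0] * a22 - a12 * b[1]) / det,
            (a11 * b[1] - b[0] * a21) / det)


def newton_system(F: Callable[[Vec], Vec], J: Callable[[Vec], Mat],
                  x0: Vec, tol: float = 1e-12, max_iter: int = 50) -> Vec:
    """Newton's method for two equations in two unknowns, F(x) = 0."""
    x = x0
    for _ in range(max_iter):
        fx = F(x)
        # Solve J(x_n) d = -F(x_n) for the change d instead of inverting J.
        d = solve_2x2(J(x), (-fx[0], -fx[1]))
        x = (x[0] + d[0], x[1] + d[1])
        if max(abs(d[0]), abs(d[1])) < tol:
            break
    return x


# Hypothetical example system: x^2 + y^2 = 4 and x*y = 1.
def F_example(v: Vec) -> Vec:
    x, y = v
    return (x * x + y * y - 4.0, x * y - 1.0)


def J_example(v: Vec) -> Mat:
    x, y = v
    return ((2.0 * x, 2.0 * y),  # partial derivatives of the first equation
            (y, x))              # partial derivatives of the second equation


if __name__ == "__main__":
    x, y = newton_system(F_example, J_example, (2.0, 0.5))
    print(x, y)
    # Exact solution on this branch: x = (sqrt 6 + sqrt 2)/2, y = (sqrt 6 - sqrt 2)/2.
    print((math.sqrt(6) + math.sqrt(2)) / 2, (math.sqrt(6) - math.sqrt(2)) / 2)
```

For larger systems one would replace `solve_2x2` with a general linear solver such as `numpy.linalg.solve`; Cramer's rule is used here only because it is short for the 2×2 case.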

For several other applications of the Newton-Raphson method, see the Wikipedia articles referenced below.

Origins of the Method

A special case of Newton’s method, the Babylonian root-finding method, has been known since ancient times. It was used for calculating the square root of a number $a$:

$$x_{n+1} = \frac{1}{2}\left(x_n + \frac{a}{x_n}\right)$$

Newton’s method was first published in 1685 in A Treatise of Algebra both Historical and Practical by John Wallis. Earlier, in 1669, Isaac Newton had considered a special case of the method.

In 1690, Joseph Raphson published a simplified description in his Analysis Aequationum Universalis. Raphson described the method in terms of successive approximations instead of the more complicated sequence of polynomials used by Newton. In 1740, Thomas Simpson described Newton’s method as an iterative method for solving general nonlinear equations (not just polynomials), essentially in the form presented above (see Wikipedia article “Newton’s Method”).

Joseph Raphson

Very few details about Joseph Raphson are available. The following remarks are based on a cursory glance at a few websites, and may not be completely accurate. The Mathematical Gazetteer of the British Isles states that Raphson studied at Jesus College, Oxford, and was conferred with a Master of Arts degree in 1692. His writings indicate a high level of scholarship. It seems that he was wealthy, but no will has been found on record. There are some indications that he may have had Irish origins. Raphson published his Analysis Aequationum Universalis in 1690. In this work he presented his simplification of Newton’s iterative method for finding the roots of equations.

Sources

Wikipedia article “Newton’s Method”.

Wikipedia article “Newton Fractal”.
