A few weeks ago, I wrote about Hyperreals and Nonstandard Analysis, promising to revisit the topic. Here comes round two.

We know that 2.999… is equal to three. But many people have a sneaking suspicion that there is “something” between the number with all those 9’s after the 2 and the number 3, that is not quite “reached” in the limit. Are there infinitesimal numbers lurking amidst the real numbers? And if we admit infinitely small quantities, how do we deal with their multiplicative inverses, which must be infinite? Serious challenges arise when we try to answer these questions.
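The equality itself can be checked with a geometric series. The following is a short worked sketch; the index $k$ and the count $n$ are notation introduced here for illustration.

$$
2.999\ldots \;=\; 2 + \sum_{k=1}^{\infty} \frac{9}{10^{k}} \;=\; 2 + \frac{9/10}{1 - 1/10} \;=\; 2 + 1 \;=\; 3 .
$$

Stopping after $n$ nines leaves a gap of exactly

$$
3 - 2.\underbrace{99\ldots9}_{n} \;=\; 10^{-n},
$$

which drops below any given positive real number once $n$ is large enough. Within the real numbers, then, nothing is left over in the limit.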

The debate about infinitesimals rumbled on for centuries after they were used by Leibniz. Euler manipulated them with his usual flair, but lesser mortals stumbled into contradiction and paradox. There was no rigorous theory available until the nineteenth century when Cauchy, Bolzano, Weierstrass and others made the concept of a limit precise. However, this circumvented rather than solved the problem of infinitesimals.

Going to the Limit

If a car is accelerating, its speed is changing from moment to moment. To get the speed at any instant, we measure the change of position over a small interval of time, say one second. Suppose the car moves twenty metres in that second; then we say the speed is twenty metres per second. But, strictly speaking, this is an approximation: what we have is the average speed for the second over which it is measured. The instantaneous speed requires that the measurement interval is vanishingly small.

The branch of mathematics that deals with changes is calculus or, more grandly, analysis. If the position of the car is given at each moment, we say it is a function of time. The speed is then the rate of change of this function and is called the derivative of the function. It is given by the difference in position (a length) divided by the small interval between measurements (a time). But how small is small?
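To make the question concrete, here is a minimal Python sketch. It assumes a hypothetical position function, $5t^2$ metres after $t$ seconds (a car with constant acceleration of 10 m/s²); the names `position` and `average_speed` are illustrative and do not come from any library.

```python
def position(t):
    """Hypothetical position in metres: constant acceleration of 10 m/s^2."""
    return 5.0 * t ** 2


def average_speed(s, t, h):
    """Average speed of s over [t, t + h]: change in position divided by elapsed time."""
    return (s(t + h) - s(t)) / h


if __name__ == "__main__":
    t0 = 2.0  # the instant of interest, in seconds
    # Shrink the measurement interval h and watch the average speed settle down.
    for h in (1.0, 0.1, 0.01, 0.001):
        speed = average_speed(position, t0, h)
        print(f"h = {h:<6} average speed = {speed:.4f} m/s")
```

For this particular function the average speed over the interval from 2 to 2 + h seconds works out to 20 + 5h metres per second, so the printed values creep towards 20 as h shrinks. The interval h is never allowed to reach zero, since that would mean dividing by zero.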

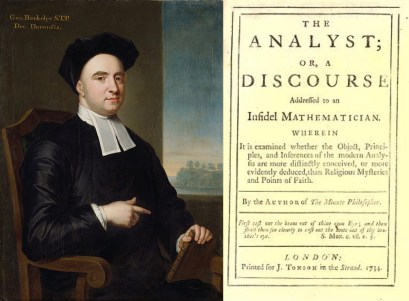

Calculus was devised independently by Isaac Newton and Gottfried Wilhelm Leibniz. Their work gave rise to heated arguments about infinitesimal quantities. Perhaps the most vitriolic criticism came from George Berkeley, who was at one time Bishop of Cloyne. He compared the mathematical use of infinitesimals to arguments in theology. Are infinitesimals finite, and so non-zero, or vanishingly small, and so zero? He mocked their use, describing them as “the ghosts of departed quantities” (see references to Berkeley below).

Leibniz investigated the idea of extending the usual number system (what we call the real numbers) to a larger set containing infinitesimals. These were quantities that are smaller than any positive real number, yet larger than zero.

It follows that the reciprocal of an infinitesimal (that is, 1 divided by it) must be larger than any real number, and therefore infinite. But Leibniz and his followers were unable to construct a self-consistent extension of the real numbers, and the arguments persisted for more than a century.
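The step from infinitely small to infinitely large can be written out in one line. This is only a sketch: it assumes that the usual rules for ordering and division carry over to the extended system, and the symbols $\varepsilon$ (an infinitesimal) and $r$ (a positive real) are introduced here for illustration.

$$
0 < \varepsilon < r \ \text{ for every real } r > 0
\quad\Longrightarrow\quad
\frac{1}{\varepsilon} > \frac{1}{r} \ \text{ for every real } r > 0 .
$$

Since $1/r$ takes every positive real value as $r$ ranges over the positive reals, $1/\varepsilon$ exceeds every real number.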

In the 1800s the problems were solved by banishing infinitesimals and defining derivatives by limits. In this way, no infinitely small quantities were used, or even mentioned. Instead, a quantity like the instantaneous speed was defined by the limit of a finite ratio as the time interval between measurements became smaller and smaller (but not zero). The limit technique, brought to a canonical form by the German mathematician Karl Weierstrass, is often called the epsilon-delta method, after the two Greek letters customarily used in the definition of a limit. Mastery of the method has been a rite of passage for students of analysis ever since.
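For reference, the definition can be stated as follows. The symbols are choices made here for illustration: $s(t)$ for the position at time $t$, $v(t)$ for the instantaneous speed and $h$ for the measurement interval.

$$
v(t) \;=\; \lim_{h \to 0} \frac{s(t+h) - s(t)}{h}
$$

means that for every $\varepsilon > 0$ there exists a $\delta > 0$ such that

$$
0 < |h| < \delta
\quad\Longrightarrow\quad
\left| \frac{s(t+h) - s(t)}{h} - v(t) \right| < \varepsilon .
$$

Every quantity appearing here is an ordinary real number, and $h = 0$ is explicitly excluded.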

Abraham Robinson

Around 1960, Abraham Robinson showed how the set of real numbers could be extended in a consistent manner to include infinitely small and infinitely large quantities. He called the extended system the hyperreal numbers, and the new method of calculus based on them became known as nonstandard analysis. This was a complete and satisfactory resolution of Leibniz’s problem. Infinitesimals were back.
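To give a flavour of how a derivative is computed in this setting, here is a short worked sketch for the function $x^2$. It relies on the standard part operation, written $\operatorname{st}$ here, which rounds a finite hyperreal to the unique real number infinitely close to it; the example and the notation are illustrative choices, not taken from Robinson's text.

$$
\frac{(x+\varepsilon)^2 - x^2}{\varepsilon}
\;=\; \frac{2x\varepsilon + \varepsilon^2}{\varepsilon}
\;=\; 2x + \varepsilon ,
\qquad
\operatorname{st}(2x + \varepsilon) \;=\; 2x ,
$$

where $x$ is real and $\varepsilon$ is a nonzero infinitesimal. Dividing by $\varepsilon$ is legitimate because it is not zero, and the leftover infinitesimal is removed by taking the standard part rather than by quietly setting it to zero.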

One might have anticipated major changes in the way mathematics was done and how it was taught. Nonstandard analysis provided simpler proofs of some fundamental theorems. However, the subtlety of the new approach caused most mathematicians to steer clear of it. They already knew the epsilon-delta method and were reluctant to change.

The extraordinary logician Kurt Gödel opined that “there are good reasons to believe that nonstandard analysis, in some version or other, will be the analysis of the future” [quoted from Robinson, 1996]. Who knows? He may well be right. However, there is no sign of that happening. Nonstandard analysis has not entered the mathematical mainstream. Perhaps the knowledge that Robinson has provided a rigorous foundation is sufficient for the majority of mathematicians, who continue with confidence that, even if they rely on less than rock-solid grounds, their arguments can be “rigorized” if necessary.

Sources

Robinson first published the idea of nonstandard analysis in a paper submitted to the Dutch Academy of Sciences (Robinson 1961):

- Robinson, Abraham, 1961: Non-Standard Analysis. Proceedings of the Koninklijke Nederlandse Akademie van Wetenschappen, Ser. A, 64: 432-440.

Robinson’s book came out five years later, and has been reissued several times:

- Robinson, Abraham, 1966: Non-Standard Analysis. Amsterdam: North-Holland Publishing Co. Princeton University Press edition, 1996, ISBN 978-0-6910-4490-3.

A wealth of material on the “Analyst” controversy of Berkeley is available via the School of Mathematics, Trinity College Dublin.
